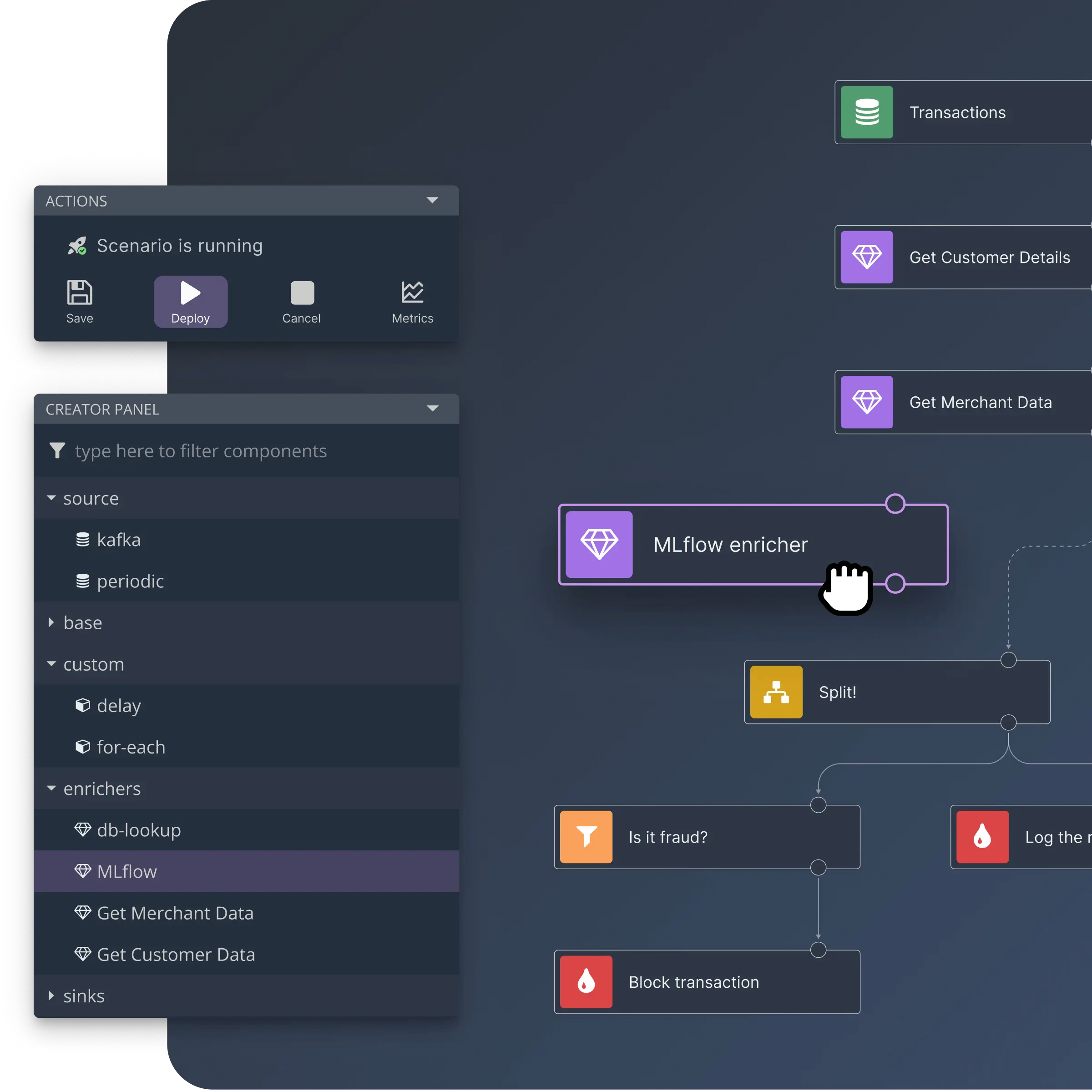

Build on Flink without the complexity. Design real-time streaming jobs in a visual editor, deploy with one click, and let your team iterate without touching Flink code.

Engineers set up integrations. Business teams own the logic.

No credit card required

Free Cloud · Self-hosted OSS · See pricing